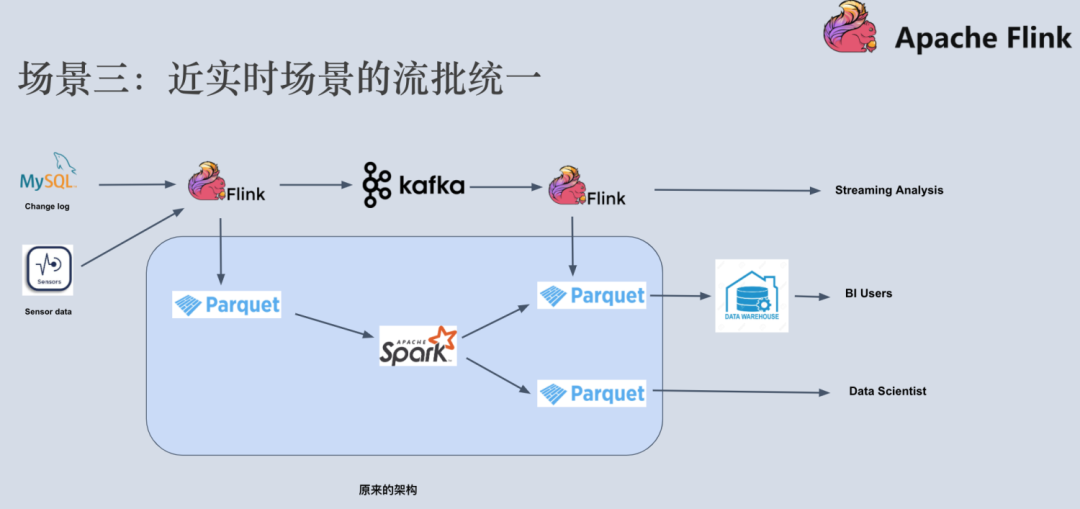

I'm trying to interact with Iceberg tables stored on S3 via a deployed Hive metastore service. The purpose is to be able to push and pull large amounts of data stored as an Iceberg data lake (on S3). A couple of days further on, after documentation, Google and Stack Overflow, it is just not coming right.

From Iceberg's documentation the only dependency seemed to be iceberg-spark-runtime, without guidelines from a PySpark perspective, but this is basically how far I got:

- iceberg-spark-runtime with the metastore URI set allowed me to make metadata calls like listing databases etc.
- Trial-and-error jar additions got me through the majority of the Java ClassNotFound exceptions.
- Next exception: Class .s3a.S3AFileSystem not found

Metadata tables can be loaded in Spark 2.

To show the valid snapshots for a table, query its snapshots metadata table, which has these columns:

| committed_at | snapshot_id | parent_id | operation | manifest_list | summary |
| --- | --- | --- | --- | --- | --- |

You can also join snapshots to table history, for example to show table history together with the application ID that wrote each snapshot.

The manifests metadata table has these columns:

| path | length | partition_spec_id | added_snapshot_id | added_data_files_count | existing_data_files_count | deleted_data_files_count | partition_summaries |
| --- | --- | --- | --- | --- | --- | --- | --- |

Fields within the partition_summaries column of the manifests table correspond to field_summary structs within the manifest list, in a fixed order. contains_nan could return null, which indicates that this information is not available from the files' metadata. This usually occurs when reading from a V1 table, where contains_nan is not populated.

The history example has two snapshots with the same parent, and one is not an ancestor of the current table state. This shows a commit that was rolled back.
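The metadata-table queries described above can be sketched as plain SQL strings built in Python. This is only an illustration: the helper names `metadata_query` and `history_with_app_id_query` are made up for this post, the table name `prod.db.table` is the example identifier used elsewhere on this page, and the join assumes the writing application recorded `spark.app.id` in each snapshot's summary map.

```python
# Sketch only: these helpers just build SQL text. Running the queries needs a
# SparkSession that already has an Iceberg catalog configured.

# Metadata tables mentioned in this post.
METADATA_TABLES = ("history", "snapshots", "manifests", "files")


def metadata_query(table: str, metadata_table: str) -> str:
    """Return a SELECT over one of a table's metadata tables."""
    if metadata_table not in METADATA_TABLES:
        raise ValueError(f"unknown metadata table: {metadata_table}")
    # The metadata table is addressed by using the table name as a namespace.
    return f"SELECT * FROM {table}.{metadata_table}"


def history_with_app_id_query(table: str) -> str:
    """Join history to snapshots to show which application wrote each snapshot."""
    return (
        "SELECT h.made_current_at, s.operation, h.snapshot_id, "
        "h.is_current_ancestor, s.summary['spark.app.id'] AS spark_app_id "
        f"FROM {table}.history h "
        f"JOIN {table}.snapshots s ON h.snapshot_id = s.snapshot_id "
        "ORDER BY h.made_current_at"
    )


if __name__ == "__main__":
    print(metadata_query("prod.db.table", "snapshots"))
    print(history_with_app_id_query("prod.db.table"))
```

With a configured session, each string could be passed to `spark.sql(...)` and inspected with `.show()`.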
The history metadata table has these columns:

| made_current_at | snapshot_id | parent_id | is_current_ancestor |
| --- | --- | --- | --- |

Metadata tables, like history and snapshots, can use the Iceberg table name as a namespace. For example, to read from the files metadata table for prod.db.table, run: SELECT * FROM prod.db.table.files

Here prod.db.table resolves as catalog: prod, namespace: db, table: table.

To load a table as a DataFrame, use spark.table(table). Iceberg 0.11.0 adds multi-catalog support to DataFrameReader in both Spark 3.x and 2.4.

Paths and table names can be loaded with Spark's DataFrameReader interface. When using spark.read.format("iceberg").load(table) or spark.table(table), the table variable can take a number of forms, as listed below:

- file:/path/to/table: loads a HadoopTable at the given path.
- catalog.tablename: loads tablename from the specified catalog.
- namespace.tablename: loads namespace.tablename from the current catalog.
- catalog.namespace.tablename: loads namespace.tablename from the specified catalog.

For example, a matching catalog will take priority over any namespace resolution.

To select a specific table snapshot or the snapshot at some time, Iceberg supports two Spark read options:

- snapshot-id selects a specific table snapshot.
- as-of-timestamp selects the current snapshot at a timestamp, in milliseconds.
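Since as-of-timestamp expects epoch milliseconds, a small helper can do the conversion and build the reader options. This is a minimal sketch: `to_epoch_millis` and `time_travel_options` are hypothetical names for this post, and accepting only one option per call is a choice of the sketch, not something stated above.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_epoch_millis(moment: datetime) -> int:
    """Convert a timezone-aware datetime to whole milliseconds since the epoch."""
    if moment.tzinfo is None:
        raise ValueError("pass a timezone-aware datetime")
    # Integer division of timedeltas avoids float rounding.
    return (moment - EPOCH) // timedelta(milliseconds=1)


def time_travel_options(
    snapshot_id: Optional[int] = None, as_of: Optional[datetime] = None
) -> Dict[str, str]:
    """Build the read option for one snapshot ID or one point in time."""
    if (snapshot_id is None) == (as_of is None):
        raise ValueError("set exactly one of snapshot_id or as_of")
    if snapshot_id is not None:
        return {"snapshot-id": str(snapshot_id)}
    return {"as-of-timestamp": str(to_epoch_millis(as_of))}


if __name__ == "__main__":
    opts = time_travel_options(as_of=datetime(2021, 1, 1, tzinfo=timezone.utc))
    print(opts)
    # With a configured SparkSession (not created here), the options could be
    # applied roughly like this:
    #   spark.read.format("iceberg").options(**opts).load("prod.db.table")
```

Keeping the conversion in one place makes it harder to pass seconds by mistake, which would silently select a much older point in time.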
